Drone inspections and thermal imaging have transformed how solar parks are monitored. Large sites can now be scanned quickly, allowing operators to detect potential issues across thousands of modules in a short amount of time.

As inspection technology evolves, automated analysis tools are also becoming more common. These tools can help highlight possible anomalies in thermal imagery and speed up the review process.

However, in real-world solar operations, automated detection alone is rarely enough.

Human verification still plays an important role in ensuring that inspection results are accurate and useful.

Not every hotspot means the same thing

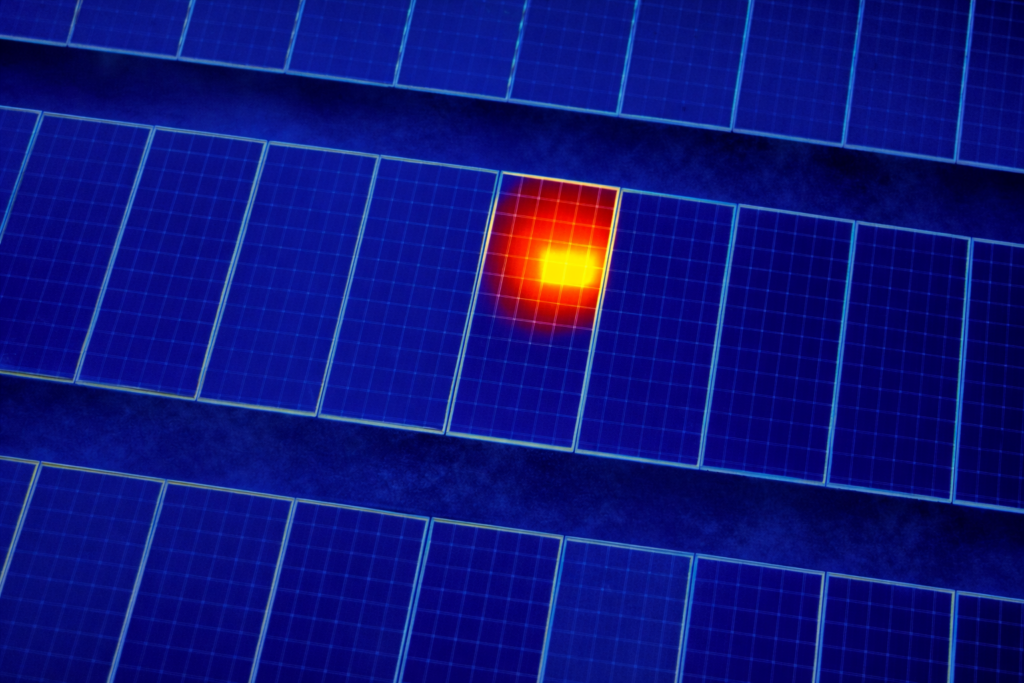

Thermal inspections often reveal hotspots or temperature differences across modules. While these signals can indicate faults, they do not always represent the same type of problem.

A thermal anomaly may be caused by several different factors, such as:

- a defective module

- an electrical connection issue

- temporary shading

- soiling patterns or environmental conditions

From a thermal image alone, it is not always possible to immediately determine the underlying cause.

This is why inspection results often require additional review before they can support maintenance decisions.

The limits of automated detection

Automated detection tools can be extremely helpful for identifying areas of interest within large datasets. They allow analysts to quickly scan thermal imagery and highlight modules that may require attention.

But automated systems can also generate false positives or misclassify certain patterns.

For example, temperature differences caused by dirt accumulation or partial shading may appear similar to electrical faults in thermal images. Without careful interpretation, these signals may lead to unnecessary maintenance actions.

In other cases, automated detection may miss subtle anomalies that require a closer look.

Because solar parks operate in diverse environmental conditions, inspection results must always be interpreted in the context of the site, and that interpretation depends on human verification.

Why human verification improves reliability

Human verification adds an important layer of confidence to inspection results.

By reviewing anomalies manually, analysts can confirm whether the detected issue is likely to represent a real operational problem. This process helps filter out false positives and ensure that maintenance teams focus only on the most relevant findings.

Verification also helps improve the classification of anomalies. Instead of simply flagging a hotspot, inspection results can provide a clearer understanding of the likely issue affecting the module or component.

This additional clarity is valuable for maintenance planning and asset management.

Supporting operational decisions

For operators, the goal of an inspection is not simply to detect anomalies. It is to understand what actions should be taken to maintain performance.

Inspection workflows that combine automated detection with human verification can deliver more reliable and actionable results.

This approach allows teams to benefit from the speed of automated tools while maintaining the accuracy required for real-world operations.

The result is inspection data that is both efficient to produce and practical to use.
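As a rough illustration of such a hybrid workflow, the Python sketch below keeps the automated label and the analyst's verdict side by side and passes only human-confirmed findings on to maintenance. The `Anomaly` class, the label strings, and both helper functions are hypothetical names chosen for this example; they do not describe any particular inspection tool.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Anomaly:
    module_id: str
    auto_label: str                       # label proposed by the automated tool
    reviewer_label: Optional[str] = None  # set by a human analyst; None = not reviewed yet


# Hypothetical verdicts that an analyst might record when no maintenance is needed.
NON_ACTIONABLE = {"false_positive", "temporary_shading"}


def pending_review(anomalies: List[Anomaly]) -> List[Anomaly]:
    """Anomalies flagged automatically but not yet checked by a person."""
    return [a for a in anomalies if a.reviewer_label is None]


def actionable_findings(anomalies: List[Anomaly]) -> List[Anomaly]:
    """Human-reviewed anomalies whose verdict still calls for maintenance."""
    return [
        a for a in anomalies
        if a.reviewer_label is not None and a.reviewer_label not in NON_ACTIONABLE
    ]
```

In this sketch, an automatically flagged hotspot reaches the maintenance list only after an analyst has recorded a verdict for it, and the analyst's label (for example a defective module rather than a generic hotspot) travels with the finding.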

A step toward more autonomous systems

Automation will continue to improve as inspection datasets grow and analysis tools become more sophisticated.

In the future, higher levels of automation may become possible for identifying and classifying certain types of anomalies.

But today, hybrid workflows that combine automated analysis with human verification and expertise remain the most reliable approach for managing large solar assets.

By maintaining this balance, inspection processes can support both operational efficiency and long-term asset reliability.

Check out our PV Evidence Pack to see our approach to solar park inspection.


Robivon – Engineering the transition from inspection to autonomous infrastructures